When using optimization to design high-performance products, buildings, or structures, putting the designer in the loop makes it possible to account for design features that are hard to quantify and turns optimization into an exploration tool.

With Caitlin Mueller

As part of my Master’s thesis at MIT, I created an interactive evolutionary optimization tool that piggy-backs on the capabilities of parametric CAD for geometry generation and provides users with a straightforward design exploration UI powered by a genetic algorithm. The tool, called stormcloud, was my first C# project. The main challenge was to work with and around the idiosyncrasies of the API of Grasshopper—the visual scripting environment on top of which stormcloud is built—since, at the time, the coding community focusing on that platform was fairly small and clear documentation was hard to find. This has fortunately changed for the better.

I built this project under the supervision of Prof. Caitlin Mueller. The tool can be downloaded on Food4Rhino as part of the Design Space Exploration (DSE) toolset, which relies in part on some of the features developed for stormcloud, and the code is open-source and available here. The code should be heavily refactored, mostly because I was an inexperienced programmer when I completed this project. I plan on doing this as part of a larger push to open-source the code I wrote during my time at MIT, which currently resides on my private MIT GitHub Enterprise account.

The tool:

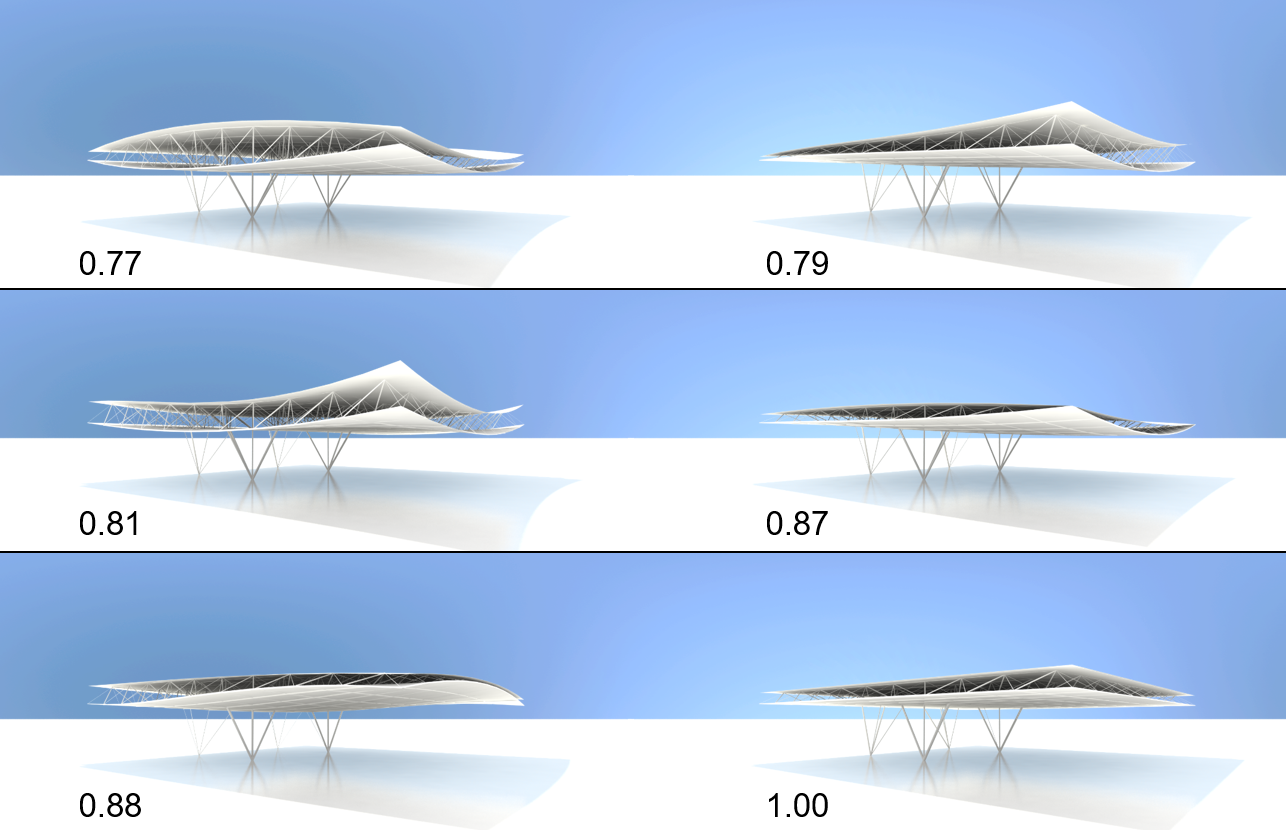

Here’s a quick demonstration of the tool on the design of a spatial truss roof, which is quantitatively evaluated based on its structural weight, itself computed from internal loads calculated using finite element analysis.

The video was recorded on one of the earlier versions of stormcloud. The UI has been slightly updated since then, and surfaces are now supported in the 3D viewport.

Evolutionary optimization may sound complicated, but it is simple from a conceptual standpoint. It is roughly inspired by Darwin’s theory of evolution and the concept of survival of the fittest. I am not an evolutionary biologist, but here’s the gist of it.

For every new generation, biological organisms undergo mutations, which may have positive, negative, or no consequences on their chances of survival. Presumably, individuals that undergo positive mutations will have a higher chance of surviving long enough to pass down their mutated genes to later generations, and, over time, individuals evolve to become more likely to survive. We can thus see evolution as a form of optimization for survival. This is of course simplistic, but it’s good enough to explain evolutionary optimization.

Evolutionary optimization seeks to tune the parameters of an objective/fitness function such that it is minimized or maximized. Here’s how a simple genetic algorithm works:

To clarify, a candidate solution is simply a vector of parameter values with a corresponding fitness value.

Mutation and crossover amount to simple operations when applied to numerical parameters. Mutating a candidate consists of randomly perturbing its parameter values to produce a new candidate solution. Crossover is an operation that requires at least two candidates, whose parameter values are randomly swapped to produce a new parameter vector.
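As a rough illustration, the two operations and a bare-bones generational loop can be sketched in a few lines of Python. This is a generic textbook-style sketch, not stormcloud's C# implementation: the function names, the Gaussian perturbation, the uniform crossover, the elitist selection, and the convention that lower fitness is better are all assumptions made for the example.

```python
import random

def mutate(params, rate, bounds, rng):
    """Randomly perturb each parameter, then clamp it to its bounds."""
    child = []
    for value, (lo, hi) in zip(params, bounds):
        # Assumption: Gaussian noise scaled by the mutation rate and the range.
        step = rng.gauss(0.0, rate * (hi - lo))
        child.append(min(hi, max(lo, value + step)))
    return child

def crossover(parent_a, parent_b, rng):
    """Uniform crossover: each parameter is taken from one parent at random."""
    return [a if rng.random() < 0.5 else b for a, b in zip(parent_a, parent_b)]

def genetic_algorithm(fitness, bounds, pop_size=30, n_parents=5,
                      rate=0.1, generations=50, seed=0):
    """Minimize `fitness` over box-bounded parameters (n_parents must be >= 2)."""
    rng = random.Random(seed)
    population = [[rng.uniform(lo, hi) for lo, hi in bounds]
                  for _ in range(pop_size)]
    for _ in range(generations):
        # Selection: keep the candidates with the lowest fitness values.
        parents = sorted(population, key=fitness)[:n_parents]
        children = []
        while len(children) < pop_size - n_parents:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rate, bounds, rng))
        # Parents survive unchanged, so the best fitness never gets worse.
        population = parents + children
    return min(population, key=fitness)

def sphere(x):
    """Toy objective with its minimum (0) at the origin."""
    return sum(v * v for v in x)

best = genetic_algorithm(sphere, bounds=[(-5.0, 5.0)] * 3)
```

In a design setting, `sphere` would be replaced by whatever evaluates a candidate, for example a structural analysis returning a weight.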

Evolutionary optimization is versatile because it is black-box: it only requires the ability to compute the objective function for a given set of parameters. It does not need gradient information, and it is a global optimization scheme—it should not easily get stuck in a local optimum. Any drawbacks? It typically requires (many) more function evaluations than gradient-based optimization algorithms, resulting in longer runtimes. It typically does not offer convergence guarantees, and, because it is stochastic, it may return a different answer every time it is run. Mathematicians often do not like genetic algorithms because they are not rigorous and are not proved to converge.

Nevertheless, they’re useful to designers because:

If the objective function is not too computationally expensive to evaluate, I would recommend first using a global optimization algorithm, such as a GA, to find the approximate location of the global optimum, and then using that location as the starting point for a deterministic local optimization algorithm.
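To make the second stage of that recommendation concrete, here is a small sketch of a deterministic, derivative-free local refinement. The compass (pattern) search is a stand-in chosen for brevity, not a method taken from stormcloud, and the objective `two_basins` and both starting points are made up for the example; `ga_estimate` plays the role of a rough answer returned by a global search.

```python
def pattern_search(objective, start, step=0.5, tol=1e-6, max_iter=10_000):
    """Deterministic compass search: probe +/- step along each axis,
    accept improvements, and halve the step when nothing improves."""
    x = list(start)
    fx = objective(x)
    for _ in range(max_iter):
        if step < tol:
            break
        improved = False
        for i in range(len(x)):
            for delta in (step, -step):
                trial = x.copy()
                trial[i] += delta
                f_trial = objective(trial)
                if f_trial < fx:
                    x, fx, improved = trial, f_trial, True
        if not improved:
            step *= 0.5
    return x, fx

def two_basins(x):
    """Hypothetical objective: global minimum near x[0] = -1.04,
    and a worse local minimum near x[0] = +0.96."""
    return (x[0] ** 2 - 1.0) ** 2 + 0.3 * x[0] + x[1] ** 2

# Assumed output of a global search: close to the global optimum, but rough.
ga_estimate = [-0.8, 0.2]
x_refined, f_refined = pattern_search(two_basins, ga_estimate)

# Starting the same local search in the wrong basin stays in that basin.
x_stuck, f_stuck = pattern_search(two_basins, [0.8, 0.2])
```

The second call shows why the global stage matters: the local search only polishes whichever basin it starts in.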

I have discussed above why GAs are useful for design, and, arguably, it has a lot to do with the fact that they’re black-box algorithms, which let us designers skip the nitty-gritty details of optimization and instead spend our time on the good stuff. The main disadvantage of this approach is that we hand over the design of our product to an optimization routine, which understands only one thing: numbers. This is problematic, as most designers do not enjoy giving up agency over their design process, which may be one of the reasons why optimization is not widely adopted in most industries.

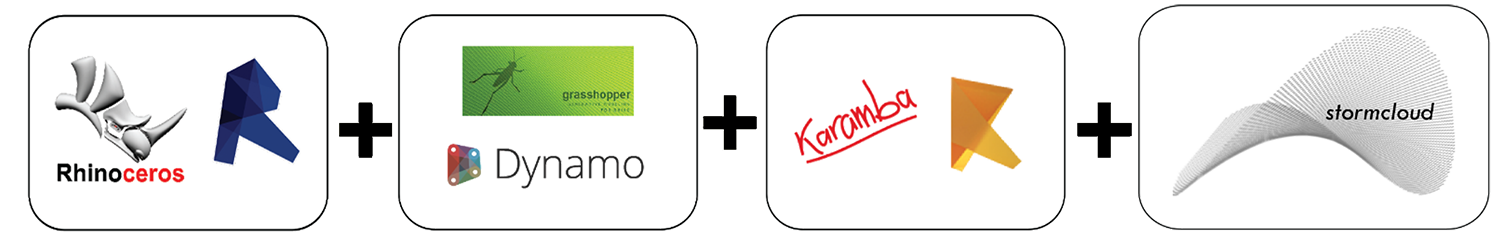

More than that, however, an optimization routine can only navigate the design space based on the quantitative inputs it is given, such as structural weight, strain energy, stress, energy consumption, daylighting level, or volume. Unfortunately, many aspects of design are unquantifiable: aesthetics, functionality, spatial quality, and manufacturability/constructability may be hard to condense into numbers but are easy for an experienced designer to assess. Therefore, it may be beneficial to include the designer in the optimization loop. To do so, there is no need to fundamentally change the genetic algorithm I presented above. Only the selection process for the best candidates is modified: instead of selecting the x best candidates based on their fitness values and using those as the basis for the next generation, the algorithm returns these same candidates to the human, who then chooses the best ones among them, which makes it possible to account for unquantifiable criteria. In addition, before generating new solutions, the human can tweak the mutation rate or the number of new solutions to be generated in order to boost or stifle the diversity of the next population.
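The modified selection step can be sketched as a single generation function in which the human is represented by a callback. Everything here is illustrative rather than taken from stormcloud: the names `interactive_generation`, `choose`, `n_shown`, and `n_children` are hypothetical, lower fitness is assumed to be better, and `designer` is a scripted stand-in for a person clicking on designs in a UI.

```python
import random

def interactive_generation(population, fitness, choose, n_shown=6,
                           n_children=12, mutation_rate=0.1, rng=None):
    """One generation with a human (the `choose` callback) selecting parents."""
    rng = rng or random.Random()
    # The algorithm still ranks by fitness, but only to build a shortlist.
    shortlist = sorted(population, key=fitness)[:n_shown]
    # The designer picks parents from the shortlist using any criteria.
    parents = choose(shortlist)
    if not parents:
        raise ValueError("the designer must select at least one candidate")
    children = []
    for _ in range(n_children):
        a, b = rng.choice(parents), rng.choice(parents)
        # Uniform crossover followed by Gaussian mutation of every parameter.
        child = [x if rng.random() < 0.5 else y for x, y in zip(a, b)]
        children.append([v + rng.gauss(0.0, mutation_rate) for v in child])
    return children

def weight(x):
    """Hypothetical quantitative metric (lower is better)."""
    return sum(v * v for v in x)

def designer(shortlist):
    """Stand-in for the human: prefers the 2nd and 4th ranked designs."""
    return [shortlist[1], shortlist[3]]

rng = random.Random(0)
population = [[rng.uniform(-1.0, 1.0) for _ in range(3)] for _ in range(20)]
# mutation_rate and n_children are the two knobs the designer can turn
# to boost or stifle the diversity of the next population.
children = interactive_generation(population, weight, designer,
                                  n_children=12, mutation_rate=0.0, rng=rng)
```

With `mutation_rate=0.0` the children are pure recombinations of the chosen designs; raising it spreads the next population further from them.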

Because of the human-computer dialogue involved with this process, this algorithm requires a UI that allows the human to evaluate the generated designs visually, which is what stormcloud sets out to do.

The tool required a few key features:

In addition to those requirements, simplicity drove the design of the UI, which now looks a tad dated and could use a little refresh, but it is still effective.

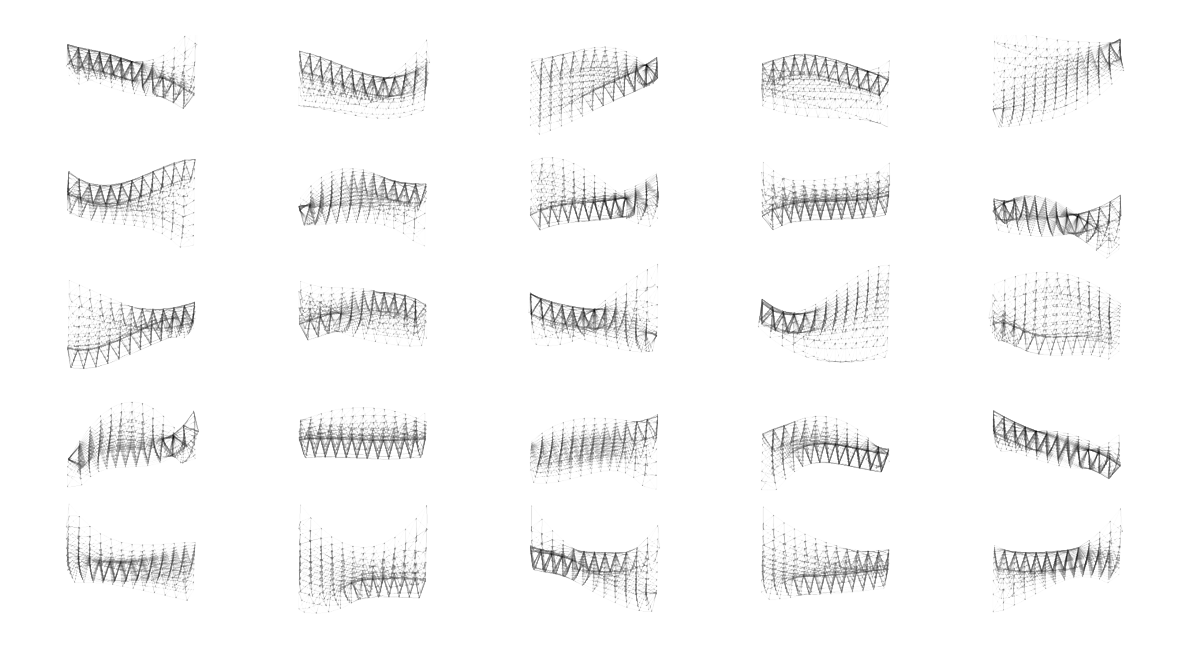

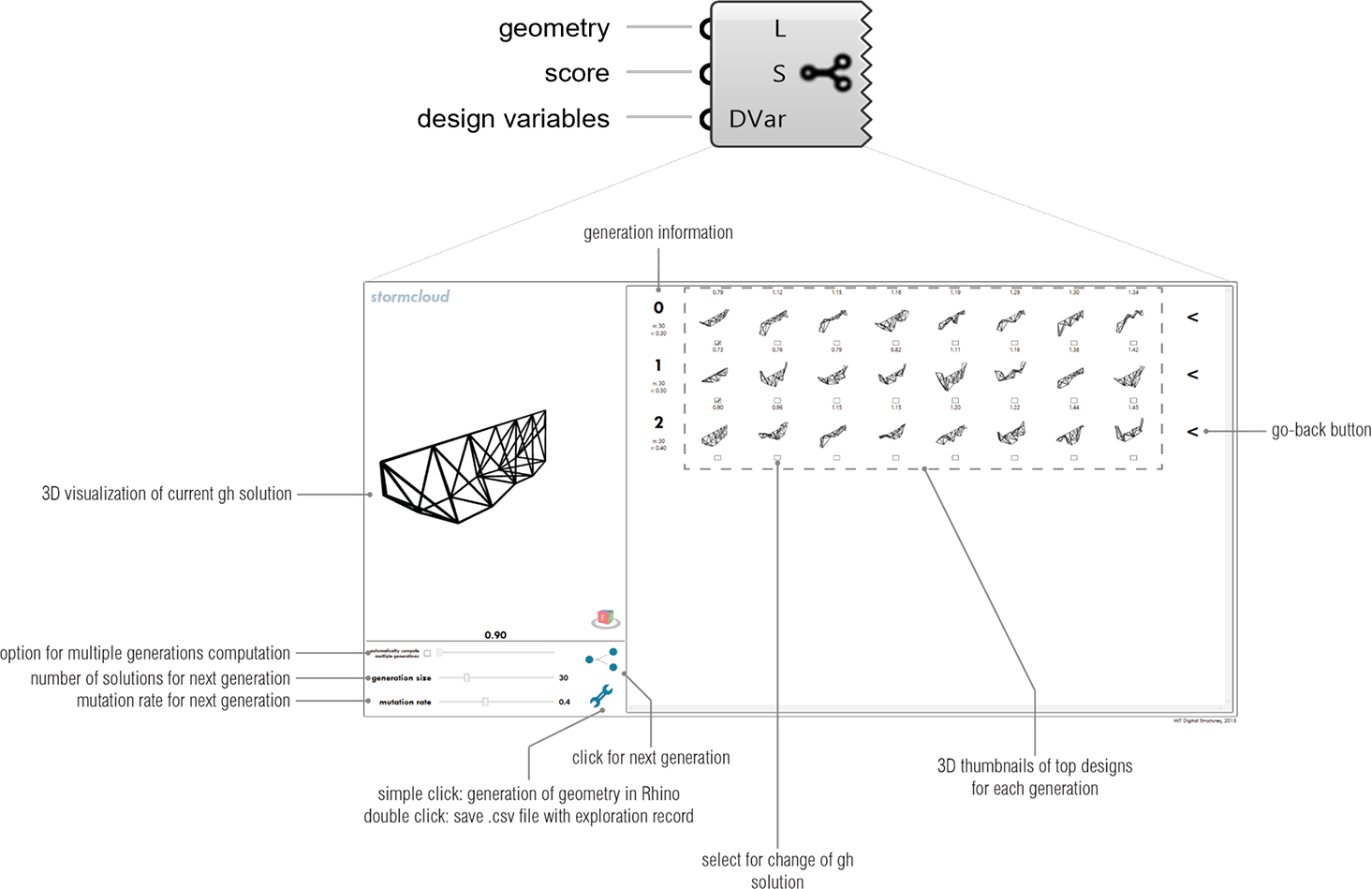

Interactive evolutionary optimization can help designers converge to a solution in a way that takes into account both a performance metric and other, non-quantified features. More than that, however, it makes it easy for designers to create design catalogs, which are useful to quickly understand the diversity of possible high-performance designs and to reveal unexpected solutions. These catalogs allow the development of new workflows where design is done partly by shopping for generated solutions (Balling, 1999; Xu et al., 2012) and refining them in later stages of the design process. In my opinion, this is powerful because of its potential for design inspiration. In that sense, the computer becomes a collaborator that generates ideas which may or may not be viable but, at the very least, perform well.

Stormcloud:

If I were to update or redesign the tool from the ground up, I’d most likely implement it using Electron, which allows a desktop app to be coded with an HTML front-end, in order to integrate additional data visualization features through the use of powerful JavaScript libraries such as d3 and three.

This work was published at the IASS 2015 symposium in Amsterdam, featured in AD (Vol. 87, Autonomous Assembly), and presented at ABX (ArchitectureBoston Expo) 2015.